Forget boardrooms; the latest battleground for leading AI companies might just be virtual Pokémon gyms. Google’s Gemini and Anthropic’s Claude, two advanced AI models, are being put to the test by navigating the classic world of early Pokémon games. But this isn’t just for fun: researchers and developers are watching to understand how these large language models (LLMs) behave in different scenarios. The surprising takeaway? Sometimes their reactions look eerily similar to human struggles, like panicking under pressure or trying out misguided strategies.

Why Test AI with Video Games?

Benchmarking AI models – comparing how they perform on different tasks – is a complex field. While traditional tests exist, some researchers believe that seeing how AIs play video games can offer unique insights. Games like Pokémon require navigation, problem-solving, resource management, and adapting to unexpected situations. This presents a different kind of challenge compared to answering questions or writing text.

It’s a bit like seeing how a complex machine handles the real world instead of just a lab test. How does an AI react when its virtual team is in trouble? Can it learn from mistakes in a game? These questions help us understand the AI’s reasoning and decision-making processes in a dynamic environment.

Watching the AI Learn (and Struggle)

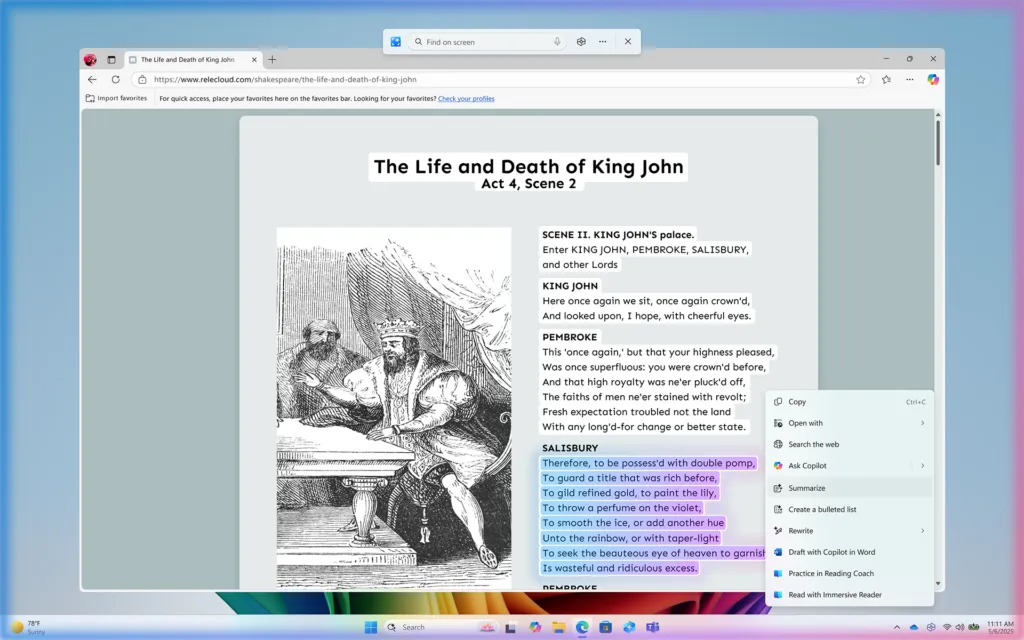

To get a closer look, independent developers have set up Twitch streams like “Gemini Plays Pokémon” and “Claude Plays Pokémon.” These streams let anyone watch the AI’s progress in real time. What’s particularly fascinating is that they also display the AI’s “reasoning”: a natural language explanation of how the model evaluates its situation and decides its next move. This gives us a window into the AI’s internal process.

AI reasoning process displayed during a Pokémon game stream

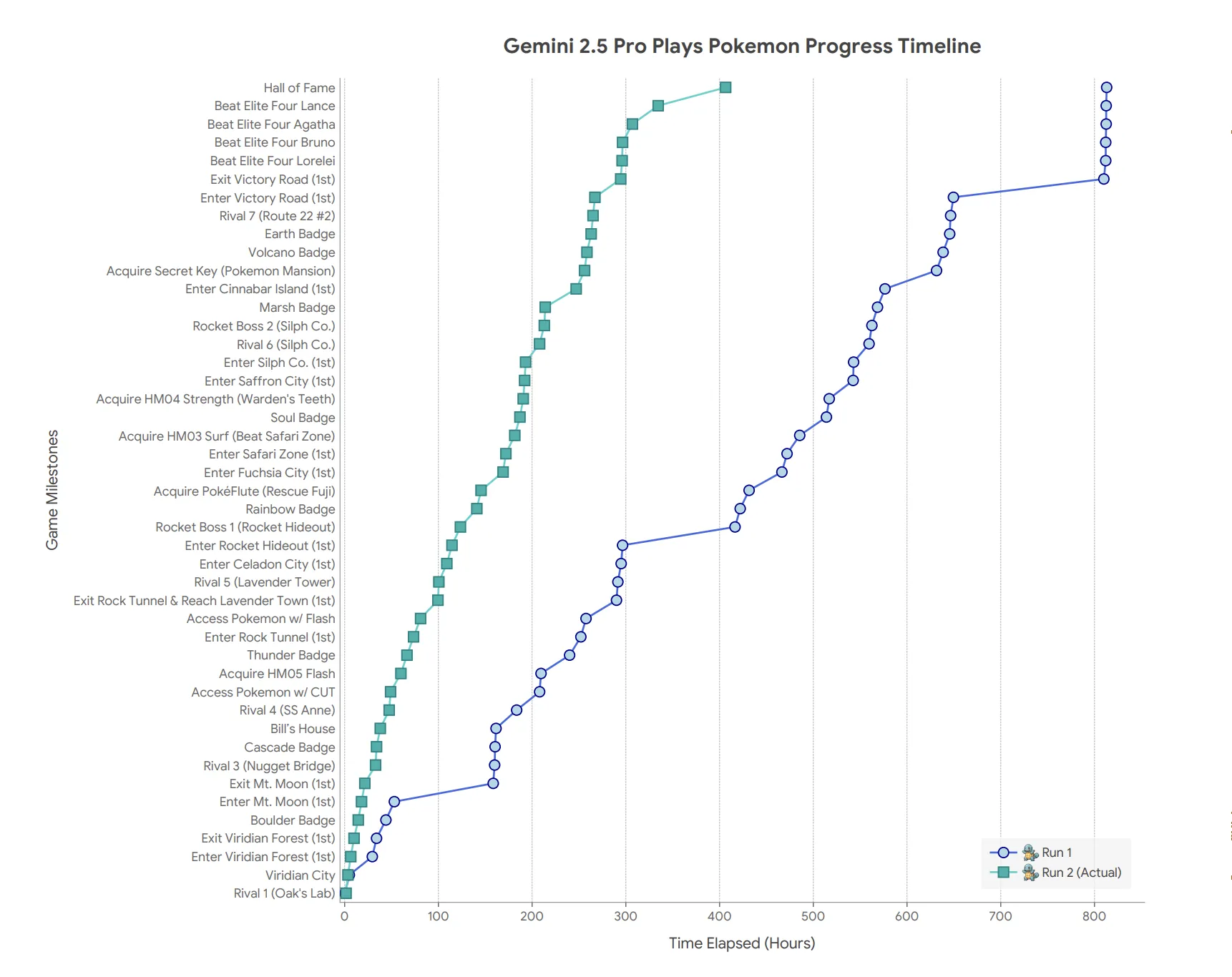

While their ability to navigate the game world at all is impressive, these AIs are often not very efficient players. It can take an AI hundreds of hours to make progress that a human child could achieve much faster. The real value is in observing how they play and react along the way, rather than how quickly they finish.

Gemini’s ‘Panic’ Mode

A recent report from Google DeepMind, which developed Gemini, detailed some surprising behavior from its Gemini 2.5 Pro model. According to the report, when the AI’s Pokémon get low on health, the model can enter a state that simulates “panic.”

This “panic” isn’t emotion, of course, but a noticeable change in the AI’s performance. The report notes a “qualitatively observable degradation in the model’s reasoning capability.” Essentially, the AI starts making poor, hasty decisions, sometimes even abandoning helpful tools it had been using effectively before. It’s a fascinating parallel to how humans might react under stress, making suboptimal choices when things get tough. Viewers in the Twitch chat have apparently noticed this recurring behavior too.

Claude’s Creative (But Wrong) Strategy

Not to be outdone in exhibiting unexpected behavior, Anthropic’s Claude model also had a memorable moment. While navigating the challenging Mt. Moon cave, Claude seemingly picked up on the game mechanic where having all your Pokémon faint (‘whiting out’) sends you back to the most recently used Pokémon Center.

Claude developed a hypothesis: if it intentionally got all its Pokémon to faint in the cave, it would be transported forward to the Pokémon Center in the next town, skipping a difficult section. Unfortunately for Claude, that’s not how the game works: you return to the most recently used Pokémon Center, which was behind it. Viewers reportedly watched in a mix of amusement and disbelief as the AI repeatedly attempted its self-defeating strategy and failed.

Where AIs Actually Shine

Despite these struggles with basic navigation and stress, there are areas where the AI models demonstrate impressive capabilities, sometimes even outperforming human players. The Google DeepMind report highlighted Gemini 2.5 Pro’s ability to solve complex puzzles.

With some initial human guidance to set up specific AI ‘tools’ (called agentic tools) focused on particular tasks, Gemini 2.5 Pro proved remarkably adept. Given a description of how boulders work and how to check a valid path, the AI could solve the intricate boulder puzzles that are often required to progress in the game, like those in Victory Road, sometimes on the first try.
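
To make “how to check a valid path” a little more concrete, here is a minimal Python sketch of such a check. It is not the tool described in the DeepMind report. The grid encoding, the function names (`step`, `is_valid_path`), and the simplified push rule (walking into a boulder shoves it one tile, unless a wall or another boulder is in the way) are all assumptions made for this illustration.

```python
from typing import FrozenSet, Iterable, List, Optional, Tuple

Pos = Tuple[int, int]  # (row, col)

# Hypothetical move encoding: Up, Down, Left, Right.
DIRS = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}


def is_floor(grid: List[str], pos: Pos) -> bool:
    """True if pos is inside the grid and not a wall ('#')."""
    r, c = pos
    return 0 <= r < len(grid) and 0 <= c < len(grid[r]) and grid[r][c] != "#"


def step(
    grid: List[str], player: Pos, boulders: FrozenSet[Pos], move: str
) -> Optional[Tuple[Pos, FrozenSet[Pos]]]:
    """Apply one move; return the new (player, boulders) or None if illegal."""
    if move not in DIRS:
        return None
    dr, dc = DIRS[move]
    target = (player[0] + dr, player[1] + dc)
    if not is_floor(grid, target):
        return None
    if target in boulders:
        # Assumed rule: the boulder slides one tile in the push direction.
        beyond = (target[0] + dr, target[1] + dc)
        if not is_floor(grid, beyond) or beyond in boulders:
            return None
        boulders = (boulders - {target}) | {beyond}
    return target, boulders


def is_valid_path(
    grid: List[str], start: Pos, boulders: Iterable[Pos], moves: str, goal: Pos
) -> bool:
    """True if every move is legal and the player ends on the goal tile."""
    player, rocks = start, frozenset(boulders)
    for move in moves:
        result = step(grid, player, rocks, move)
        if result is None:
            return False
        player, rocks = result
    return player == goal


# A one-row corridor: the player at (1, 1) pushes the boulder at (1, 2) right.
corridor = ["#####", "#...#", "#####"]
print(is_valid_path(corridor, (1, 1), {(1, 2)}, "R", (1, 2)))
```

A checker like this only verifies a proposed sequence of moves; finding the sequence is still left to the model (or to a search procedure built on top of `step`).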

Google theorizes that future versions of Gemini might even be able to create these specialized problem-solving tools entirely on their own. Perhaps, one day, an AI will even develop a ‘don’t panic’ module for itself!

Watching AI models play games like Pokémon gives us a unique and often entertaining look into how these complex systems learn, reason, and react to challenges. It highlights both their surprising limitations and their impressive problem-solving potential. These virtual playgrounds offer valuable insights into the future of AI behavior.